Sarang Patil

PhD Student, Department of Data Science, New Jersey Institute of Technology

I am a second-year PhD student in the Department of Data Science at the New Jersey Institute of Technology (NJIT), advised by Dr. Mengjia Xu and a member of the Xu Lab. My research explores how hyperbolic geometry, state-space models, and graph neural networks can be used to build efficient large language models and dynamic graph embeddings.

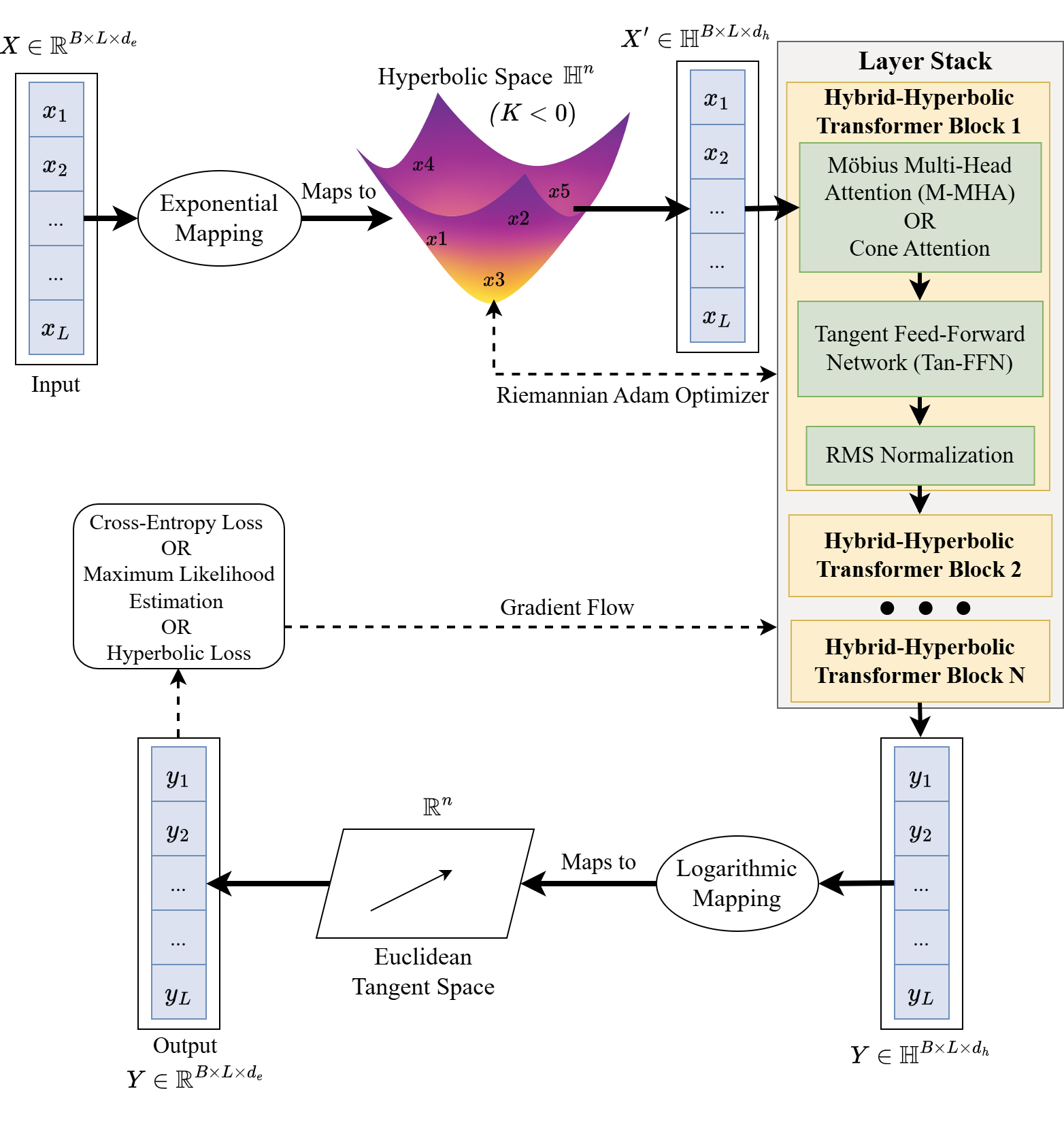

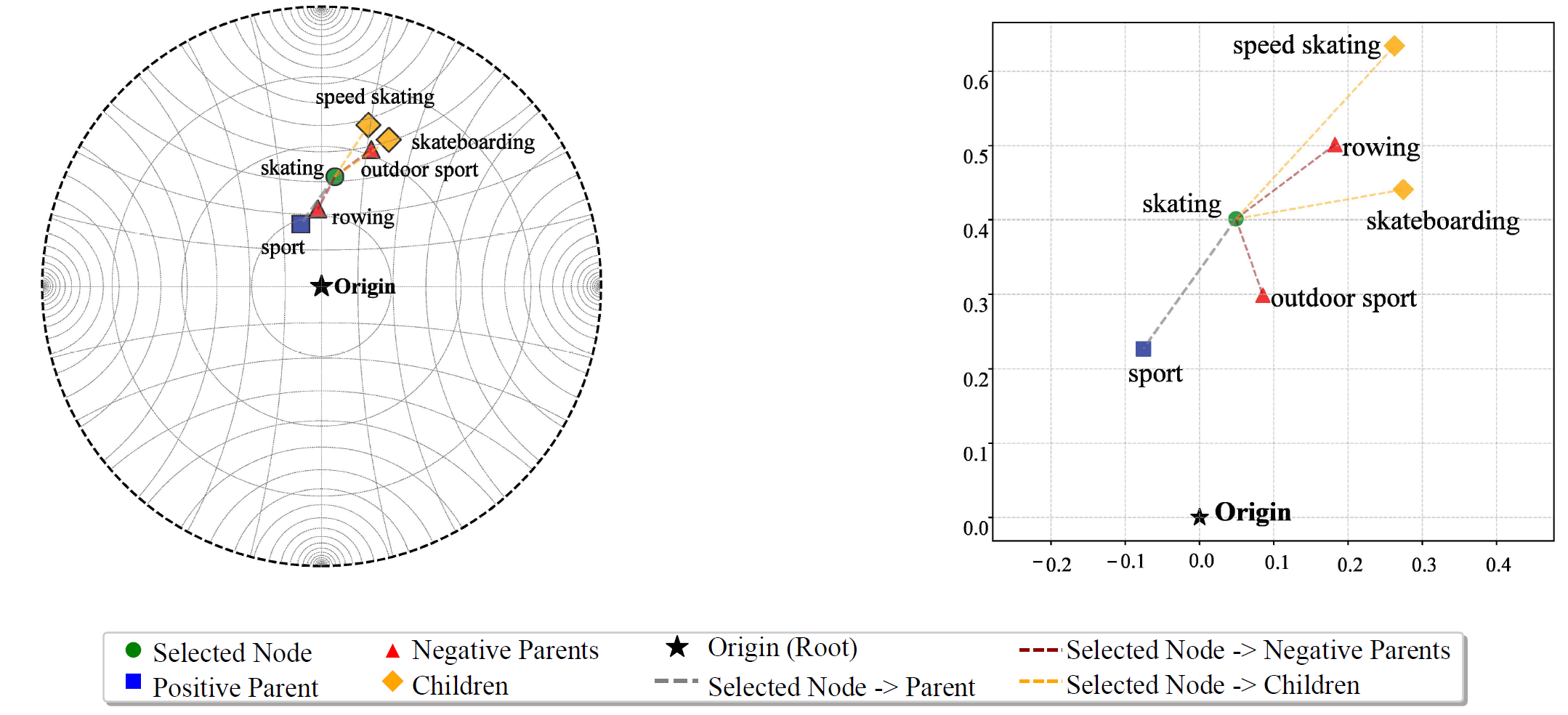

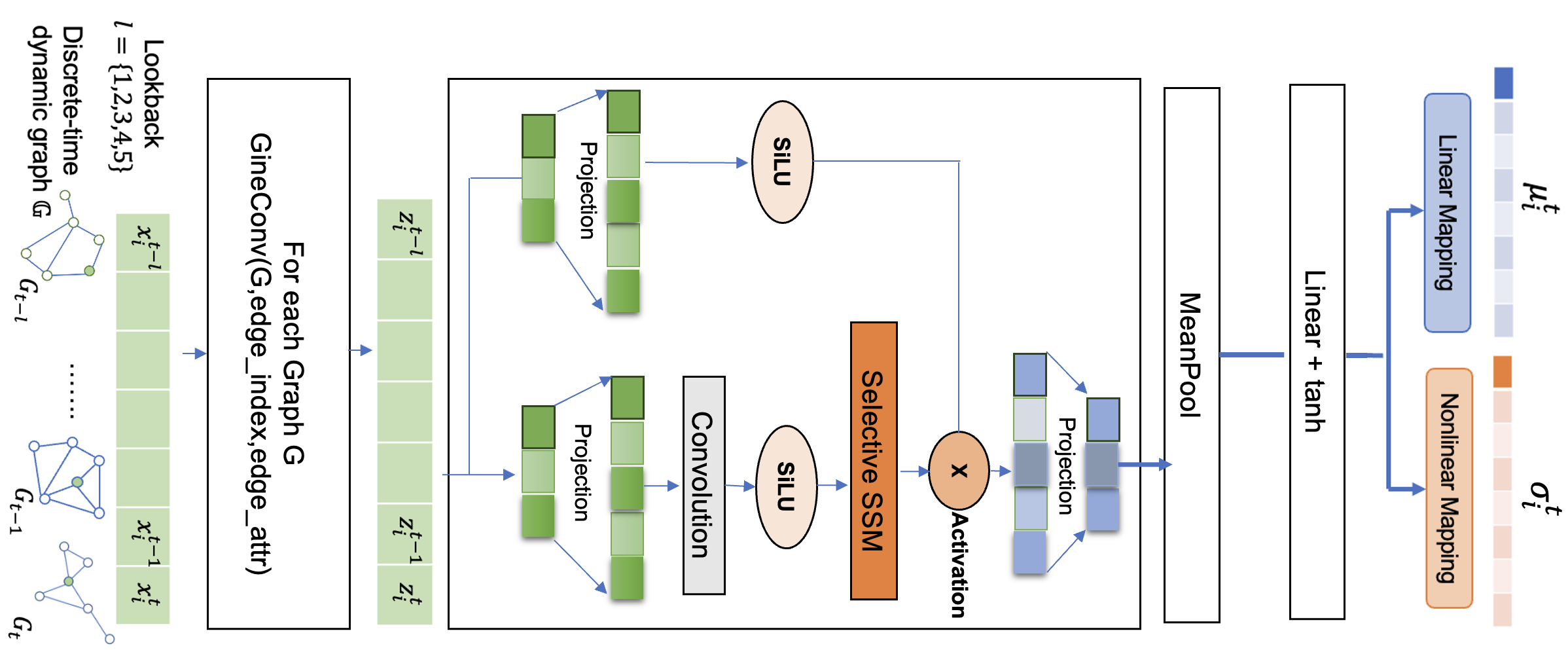

Recent work includes the Hierarchical Mamba framework, which combines efficient Mamba2 state-space models with hyperbolic spaces to capture hierarchical relationships in language data, and a comparative study of dynamic graph embedding approaches using transformers and the Mamba architecture. Alongside these projects, I continue to develop hyperbolic models for other domains, exploring how curvature-aware embeddings can benefit a wide range of applications. I was also involved in surveying and organizing the rapidly growing body of work on hyperbolic large language models, which forms the basis of my recent survey accepted by SIAM Review.

Outside of research, I enjoy playing chess ♟️, hiking 🥾, exploring new cuisines 🍜🌍, and playing video games 🎮.

Education

New Jersey Institute of Technology

University of Maryland Baltimore County

Savitribai Phule Pune University, India

Interests

- Hyperbolic geometry and non-Euclidean representation learning

- State-space models (SSMs) and efficient sequence modeling

- Large language models and hierarchy-aware embeddings

- Graph neural networks and dynamic graph embedding

- Curvature-aware optimization and geometric deep learning

Experience

Research Assistant, New Jersey Institute of Technology, NJ

Research Assistant, University of Maryland Baltimore County, MD

Data Science Intern, CoReCo Technologies, Pune, India

Project Intern, Aalborg University, Copenhagen, Denmark

Publications

Academic Service

Reviewer:

- Journal: IEEE Transactions on Knowledge and Data Engineering (TKDE)

- Journal: Advances in Space Research (ASR)

- Conference: Association for Computational Linguistics (ACL)

News

- March 26, 2026 (upcoming): Will be presenting the HiM paper at AI Exploration Day

- November 24, 2025: Paper accepted by SIAM Review

- October 27, 2025: Presented the Hyperbolic Mamba paper at the YWCC PhD Student Research Sharing Forum

- October 20, 2025: Passed my PhD qualifying exam

Contact

Email: sp3463@njit.edu

Address: New Jersey Institute of Technology, Newark, NJ 07102